mirror of https://github.com/netbirdio/netbird.git, synced 2026-04-01 15:14:03 -04:00

Compare commits: nb-interfa ... set-comman (1 commit)

| Author | SHA1 | Date |
|---|---|---|
| | 355bab9bb4 | |

4

.github/workflows/git-town.yml

vendored


@@ -16,6 +16,6 @@ jobs:

|

||||

|

||||

steps:

|

||||

- uses: actions/checkout@v4

|

||||

- uses: git-town/action@v1.2.1

|

||||

- uses: git-town/action@v1

|

||||

with:

|

||||

skip-single-stacks: true

|

||||

skip-single-stacks: true

|

||||

@@ -43,7 +43,7 @@ jobs:

|

||||

- name: gomobile init

|

||||

run: gomobile init

|

||||

- name: build android netbird lib

|

||||

run: PATH=$PATH:$(go env GOPATH) gomobile bind -o $GITHUB_WORKSPACE/netbird.aar -javapkg=io.netbird.gomobile -ldflags="-checklinkname=0 -X golang.zx2c4.com/wireguard/ipc.socketDirectory=/data/data/io.netbird.client/cache/wireguard -X github.com/netbirdio/netbird/version.version=buildtest" $GITHUB_WORKSPACE/client/android

|

||||

run: PATH=$PATH:$(go env GOPATH) gomobile bind -o $GITHUB_WORKSPACE/netbird.aar -javapkg=io.netbird.gomobile -ldflags="-X golang.zx2c4.com/wireguard/ipc.socketDirectory=/data/data/io.netbird.client/cache/wireguard -X github.com/netbirdio/netbird/version.version=buildtest" $GITHUB_WORKSPACE/client/android

|

||||

env:

|

||||

CGO_ENABLED: 0

|

||||

ANDROID_NDK_HOME: /usr/local/lib/android/sdk/ndk/23.1.7779620

|

||||

|

||||

16

.github/workflows/release.yml

vendored


@@ -9,7 +9,7 @@ on:

|

||||

pull_request:

|

||||

|

||||

env:

|

||||

SIGN_PIPE_VER: "v0.0.21"

|

||||

SIGN_PIPE_VER: "v0.0.20"

|

||||

GORELEASER_VER: "v2.3.2"

|

||||

PRODUCT_NAME: "NetBird"

|

||||

COPYRIGHT: "NetBird GmbH"

|

||||

@@ -231,17 +231,3 @@ jobs:

|

||||

ref: ${{ env.SIGN_PIPE_VER }}

|

||||

token: ${{ secrets.SIGN_GITHUB_TOKEN }}

|

||||

inputs: '{ "tag": "${{ github.ref }}", "skipRelease": false }'

|

||||

|

||||

post_on_forum:

|

||||

runs-on: ubuntu-latest

|

||||

continue-on-error: true

|

||||

needs: [trigger_signer]

|

||||

steps:

|

||||

- uses: Codixer/discourse-topic-github-release-action@v2.0.1

|

||||

with:

|

||||

discourse-api-key: ${{ secrets.DISCOURSE_RELEASES_API_KEY }}

|

||||

discourse-base-url: https://forum.netbird.io

|

||||

discourse-author-username: NetBird

|

||||

discourse-category: 17

|

||||

discourse-tags:

|

||||

releases

|

||||

|

||||

48

README.md


@@ -14,9 +14,6 @@

|

||||

<br>

|

||||

<a href="https://docs.netbird.io/slack-url">

|

||||

<img src="https://img.shields.io/badge/slack-@netbird-red.svg?logo=slack"/>

|

||||

</a>

|

||||

<a href="https://forum.netbird.io">

|

||||

<img src="https://img.shields.io/badge/community forum-@netbird-red.svg?logo=discourse"/>

|

||||

</a>

|

||||

<br>

|

||||

<a href="https://gurubase.io/g/netbird">

|

||||

@@ -32,13 +29,13 @@

|

||||

<br/>

|

||||

See <a href="https://netbird.io/docs/">Documentation</a>

|

||||

<br/>

|

||||

Join our <a href="https://docs.netbird.io/slack-url">Slack channel</a> or our <a href="https://forum.netbird.io">Community forum</a>

|

||||

Join our <a href="https://docs.netbird.io/slack-url">Slack channel</a>

|

||||

<br/>

|

||||

|

||||

</strong>

|

||||

<br>

|

||||

<a href="https://registry.terraform.io/providers/netbirdio/netbird/latest">

|

||||

New: NetBird terraform provider

|

||||

<a href="https://github.com/netbirdio/kubernetes-operator">

|

||||

New: NetBird Kubernetes Operator

|

||||

</a>

|

||||

</p>

|

||||

|

||||

@@ -50,9 +47,10 @@

|

||||

|

||||

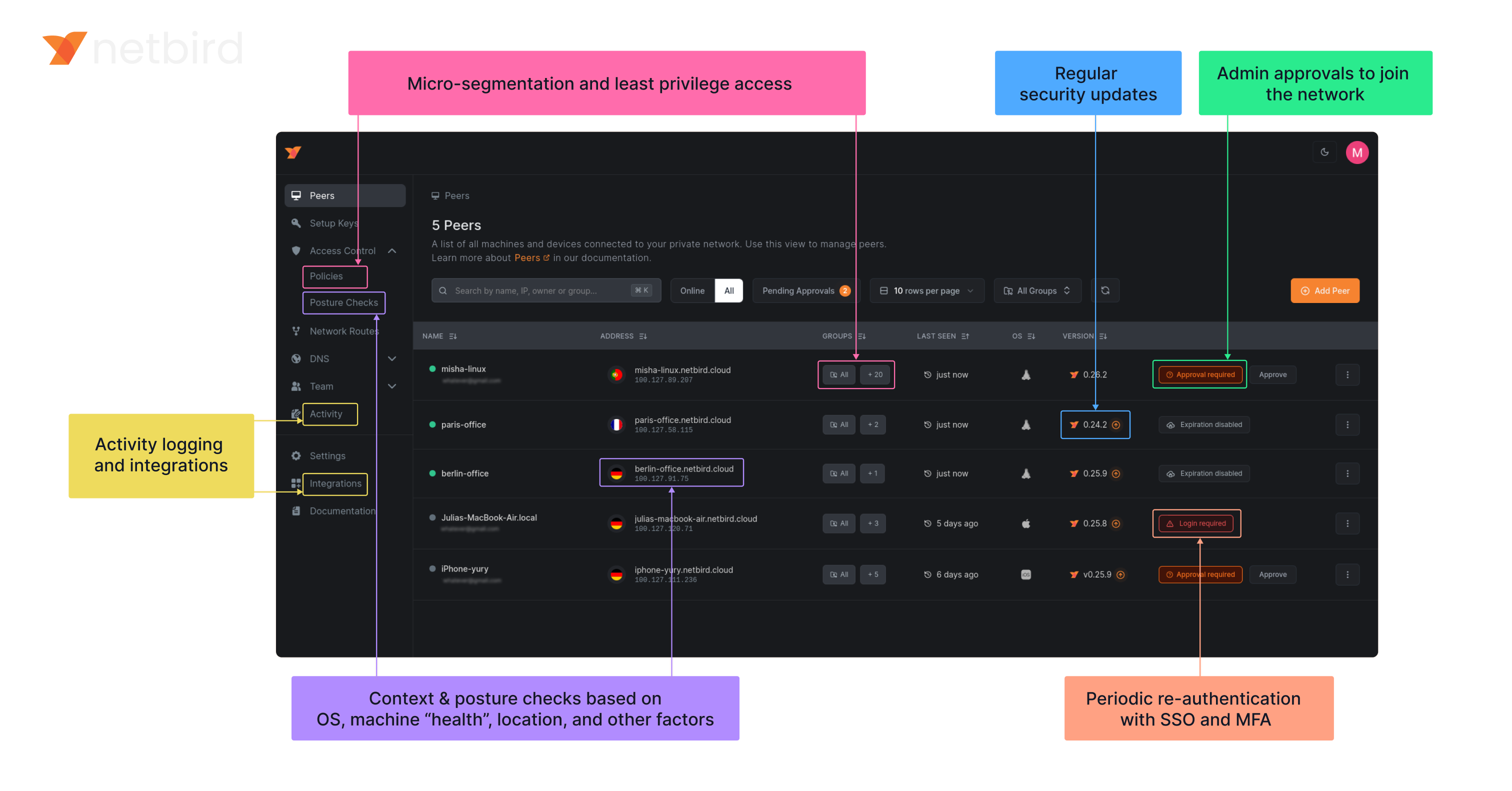

**Secure.** NetBird enables secure remote access by applying granular access policies while allowing you to manage them intuitively from a single place. Works universally on any infrastructure.

|

||||

|

||||

### Open Source Network Security in a Single Platform

|

||||

### Open-Source Network Security in a Single Platform

|

||||

|

||||

<img width="1188" alt="centralized-network-management 1" src="https://github.com/user-attachments/assets/c28cc8e4-15d2-4d2f-bb97-a6433db39d56" />

|

||||

|

||||

|

||||

|

||||

### NetBird on Lawrence Systems (Video)

|

||||

[](https://www.youtube.com/watch?v=Kwrff6h0rEw)

|

||||

@@ -136,3 +134,37 @@ We use open-source technologies like [WireGuard®](https://www.wireguard.com/),

|

||||

### Legal

|

||||

_WireGuard_ and the _WireGuard_ logo are [registered trademarks](https://www.wireguard.com/trademark-policy/) of Jason A. Donenfeld.

|

||||

|

||||

## Configuration Management

NetBird now supports direct configuration management via CLI commands:

- You can use `netbird set` as a regular user if the daemon is running; it will securely update the config via the daemon.
- If the daemon is not running, you need write access to the config file (typically requires root).
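The two cases above can be sketched in Go roughly as follows. This is a simplified illustration, not the NetBird implementation: `choosePath` and the `writePath` type are hypothetical names, and the sketch assumes root is identified by the UID string `"0"` and that daemon reachability has already been determined by the caller.

```go
package main

import "fmt"

// writePath names the two ways a setting could be persisted (hypothetical type).
type writePath string

const (
	viaDaemon  writePath = "daemon"
	directFile writePath = "config-file"
)

// choosePath is a hypothetical helper: a non-root user with a reachable
// daemon goes through the daemon; everyone else writes the config file
// directly, which requires write access to that file.
func choosePath(uid string, daemonReachable bool) writePath {
	if uid != "0" && daemonReachable {
		return viaDaemon
	}
	return directFile
}

func main() {
	fmt.Println(choosePath("1000", true))  // regular user, daemon running
	fmt.Println(choosePath("1000", false)) // regular user, daemon not running
	fmt.Println(choosePath("0", true))     // root
}
```

In the second case the direct write would only succeed if the user can actually write to the config file, which is why root is typically needed.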

### Set a configuration value

```
netbird set <setting> <value>
# or using environment variables
NB_INTERFACE_NAME=utun5 netbird set interface-name
```

### Get a configuration value

```
netbird get <setting>
# or using environment variables
NB_INTERFACE_NAME=utun5 netbird get interface-name
```

### Show all configuration values

```
netbird show
```

- All settings support environment variable overrides: `NB_<SETTING>` or `WT_<SETTING>` (e.g. `NB_ENABLE_ROSENPASS=true`).
- Supported settings: management-url, admin-url, interface-name, external-ip-map, extra-iface-blacklist, dns-resolver-address, extra-dns-labels, preshared-key, enable-rosenpass, rosenpass-permissive, allow-server-ssh, network-monitor, disable-auto-connect, disable-client-routes, disable-server-routes, disable-dns, disable-firewall, block-lan-access, block-inbound, enable-lazy-connection, wireguard-port, dns-router-interval.

See `netbird set --help`, `netbird get --help`, and `netbird show --help` for more details.

|

||||

|

||||

|

||||

@@ -64,9 +64,7 @@ type Client struct {

|

||||

}

|

||||

|

||||

// NewClient instantiate a new Client

|

||||

func NewClient(cfgFile string, androidSDKVersion int, deviceName string, uiVersion string, tunAdapter TunAdapter, iFaceDiscover IFaceDiscover, networkChangeListener NetworkChangeListener) *Client {

|

||||

execWorkaround(androidSDKVersion)

|

||||

|

||||

func NewClient(cfgFile, deviceName string, uiVersion string, tunAdapter TunAdapter, iFaceDiscover IFaceDiscover, networkChangeListener NetworkChangeListener) *Client {

|

||||

net.SetAndroidProtectSocketFn(tunAdapter.ProtectSocket)

|

||||

return &Client{

|

||||

cfgFile: cfgFile,

|

||||

@@ -205,10 +203,8 @@ func (c *Client) Networks() *NetworkArray {

|

||||

continue

|

||||

}

|

||||

|

||||

r := routes[0]

|

||||

netStr := r.Network.String()

|

||||

if r.IsDynamic() {

|

||||

netStr = r.Domains.SafeString()

|

||||

if routes[0].IsDynamic() {

|

||||

continue

|

||||

}

|

||||

|

||||

peer, err := c.recorder.GetPeer(routes[0].Peer)

|

||||

@@ -218,7 +214,7 @@ func (c *Client) Networks() *NetworkArray {

|

||||

}

|

||||

network := Network{

|

||||

Name: string(id),

|

||||

Network: netStr,

|

||||

Network: routes[0].Network.String(),

|

||||

Peer: peer.FQDN,

|

||||

Status: peer.ConnStatus.String(),

|

||||

}

|

||||

|

||||

@@ -1,26 +0,0 @@

|

||||

//go:build android

|

||||

|

||||

package android

|

||||

|

||||

import (

|

||||

"fmt"

|

||||

_ "unsafe"

|

||||

)

|

||||

|

||||

// https://github.com/golang/go/pull/69543/commits/aad6b3b32c81795f86bc4a9e81aad94899daf520

|

||||

// In Android version 11 and earlier, pidfd-related system calls

|

||||

// are not allowed by the seccomp policy, which causes crashes due

|

||||

// to SIGSYS signals.

|

||||

|

||||

//go:linkname checkPidfdOnce os.checkPidfdOnce

|

||||

var checkPidfdOnce func() error

|

||||

|

||||

func execWorkaround(androidSDKVersion int) {

|

||||

if androidSDKVersion > 30 { // above Android 11

|

||||

return

|

||||

}

|

||||

|

||||

checkPidfdOnce = func() error {

|

||||

return fmt.Errorf("unsupported Android version")

|

||||

}

|

||||

}

|

||||

@@ -17,18 +17,10 @@ import (

|

||||

"github.com/netbirdio/netbird/client/server"

|

||||

nbstatus "github.com/netbirdio/netbird/client/status"

|

||||

mgmProto "github.com/netbirdio/netbird/management/proto"

|

||||

"github.com/netbirdio/netbird/upload-server/types"

|

||||

)

|

||||

|

||||

const errCloseConnection = "Failed to close connection: %v"

|

||||

|

||||

var (

|

||||

logFileCount uint32

|

||||

systemInfoFlag bool

|

||||

uploadBundleFlag bool

|

||||

uploadBundleURLFlag string

|

||||

)

|

||||

|

||||

var debugCmd = &cobra.Command{

|

||||

Use: "debug",

|

||||

Short: "Debugging commands",

|

||||

@@ -96,13 +88,12 @@ func debugBundle(cmd *cobra.Command, _ []string) error {

|

||||

|

||||

client := proto.NewDaemonServiceClient(conn)

|

||||

request := &proto.DebugBundleRequest{

|

||||

Anonymize: anonymizeFlag,

|

||||

Status: getStatusOutput(cmd, anonymizeFlag),

|

||||

SystemInfo: systemInfoFlag,

|

||||

LogFileCount: logFileCount,

|

||||

Anonymize: anonymizeFlag,

|

||||

Status: getStatusOutput(cmd, anonymizeFlag),

|

||||

SystemInfo: debugSystemInfoFlag,

|

||||

}

|

||||

if uploadBundleFlag {

|

||||

request.UploadURL = uploadBundleURLFlag

|

||||

if debugUploadBundle {

|

||||

request.UploadURL = debugUploadBundleURL

|

||||

}

|

||||

resp, err := client.DebugBundle(cmd.Context(), request)

|

||||

if err != nil {

|

||||

@@ -114,7 +105,7 @@ func debugBundle(cmd *cobra.Command, _ []string) error {

|

||||

return fmt.Errorf("upload failed: %s", resp.GetUploadFailureReason())

|

||||

}

|

||||

|

||||

if uploadBundleFlag {

|

||||

if debugUploadBundle {

|

||||

cmd.Printf("Upload file key:\n%s\n", resp.GetUploadedKey())

|

||||

}

|

||||

|

||||

@@ -232,13 +223,12 @@ func runForDuration(cmd *cobra.Command, args []string) error {

|

||||

headerPreDown := fmt.Sprintf("----- Netbird pre-down - Timestamp: %s - Duration: %s", time.Now().Format(time.RFC3339), duration)

|

||||

statusOutput = fmt.Sprintf("%s\n%s\n%s", statusOutput, headerPreDown, getStatusOutput(cmd, anonymizeFlag))

|

||||

request := &proto.DebugBundleRequest{

|

||||

Anonymize: anonymizeFlag,

|

||||

Status: statusOutput,

|

||||

SystemInfo: systemInfoFlag,

|

||||

LogFileCount: logFileCount,

|

||||

Anonymize: anonymizeFlag,

|

||||

Status: statusOutput,

|

||||

SystemInfo: debugSystemInfoFlag,

|

||||

}

|

||||

if uploadBundleFlag {

|

||||

request.UploadURL = uploadBundleURLFlag

|

||||

if debugUploadBundle {

|

||||

request.UploadURL = debugUploadBundleURL

|

||||

}

|

||||

resp, err := client.DebugBundle(cmd.Context(), request)

|

||||

if err != nil {

|

||||

@@ -265,7 +255,7 @@ func runForDuration(cmd *cobra.Command, args []string) error {

|

||||

return fmt.Errorf("upload failed: %s", resp.GetUploadFailureReason())

|

||||

}

|

||||

|

||||

if uploadBundleFlag {

|

||||

if debugUploadBundle {

|

||||

cmd.Printf("Upload file key:\n%s\n", resp.GetUploadedKey())

|

||||

}

|

||||

|

||||

@@ -307,7 +297,7 @@ func getStatusOutput(cmd *cobra.Command, anon bool) string {

|

||||

cmd.PrintErrf("Failed to get status: %v\n", err)

|

||||

} else {

|

||||

statusOutputString = nbstatus.ParseToFullDetailSummary(

|

||||

nbstatus.ConvertToStatusOutputOverview(statusResp, anon, "", nil, nil, nil, ""),

|

||||

nbstatus.ConvertToStatusOutputOverview(statusResp, anon, "", nil, nil, nil),

|

||||

)

|

||||

}

|

||||

return statusOutputString

|

||||

@@ -385,15 +375,3 @@ func generateDebugBundle(config *internal.Config, recorder *peer.Status, connect

|

||||

}

|

||||

log.Infof("Generated debug bundle from SIGUSR1 at: %s", path)

|

||||

}

|

||||

|

||||

func init() {

|

||||

debugBundleCmd.Flags().Uint32VarP(&logFileCount, "log-file-count", "C", 1, "Number of rotated log files to include in debug bundle")

|

||||

debugBundleCmd.Flags().BoolVarP(&systemInfoFlag, "system-info", "S", true, "Adds system information to the debug bundle")

|

||||

debugBundleCmd.Flags().BoolVarP(&uploadBundleFlag, "upload-bundle", "U", false, "Uploads the debug bundle to a server")

|

||||

debugBundleCmd.Flags().StringVar(&uploadBundleURLFlag, "upload-bundle-url", types.DefaultBundleURL, "Service URL to get an URL to upload the debug bundle")

|

||||

|

||||

forCmd.Flags().Uint32VarP(&logFileCount, "log-file-count", "C", 1, "Number of rotated log files to include in debug bundle")

|

||||

forCmd.Flags().BoolVarP(&systemInfoFlag, "system-info", "S", true, "Adds system information to the debug bundle")

|

||||

forCmd.Flags().BoolVarP(&uploadBundleFlag, "upload-bundle", "U", false, "Uploads the debug bundle to a server")

|

||||

forCmd.Flags().StringVar(&uploadBundleURLFlag, "upload-bundle-url", types.DefaultBundleURL, "Service URL to get an URL to upload the debug bundle")

|

||||

}

|

||||

|

||||

@@ -22,6 +22,8 @@ import (

|

||||

"google.golang.org/grpc/credentials/insecure"

|

||||

|

||||

"github.com/netbirdio/netbird/client/internal"

|

||||

"github.com/netbirdio/netbird/client/proto"

|

||||

"github.com/netbirdio/netbird/upload-server/types"

|

||||

)

|

||||

|

||||

const (

|

||||

@@ -37,7 +39,10 @@ const (

|

||||

serverSSHAllowedFlag = "allow-server-ssh"

|

||||

extraIFaceBlackListFlag = "extra-iface-blacklist"

|

||||

dnsRouteIntervalFlag = "dns-router-interval"

|

||||

systemInfoFlag = "system-info"

|

||||

enableLazyConnectionFlag = "enable-lazy-connection"

|

||||

uploadBundle = "upload-bundle"

|

||||

uploadBundleURL = "upload-bundle-url"

|

||||

)

|

||||

|

||||

var (

|

||||

@@ -71,7 +76,10 @@ var (

|

||||

autoConnectDisabled bool

|

||||

extraIFaceBlackList []string

|

||||

anonymizeFlag bool

|

||||

debugSystemInfoFlag bool

|

||||

dnsRouteInterval time.Duration

|

||||

debugUploadBundle bool

|

||||

debugUploadBundleURL string

|

||||

lazyConnEnabled bool

|

||||

|

||||

rootCmd = &cobra.Command{

|

||||

@@ -80,6 +88,30 @@ var (

|

||||

Long: "",

|

||||

SilenceUsage: true,

|

||||

}

|

||||

|

||||

getCmd = &cobra.Command{

|

||||

Use: "get <setting>",

|

||||

Short: "Get a configuration value from the config file",

|

||||

Long: `Get a configuration value from the Netbird config file. You can also use NB_<SETTING> or WT_<SETTING> environment variables to override the value (same as 'set').`,

|

||||

Args: cobra.ExactArgs(1),

|

||||

RunE: getFunc,

|

||||

}

|

||||

|

||||

showCmd = &cobra.Command{

|

||||

Use: "show",

|

||||

Short: "Show all configuration values",

|

||||

Long: `Show all configuration values from the Netbird config file, with environment variable overrides if present.`,

|

||||

Args: cobra.NoArgs,

|

||||

RunE: showFunc,

|

||||

}

|

||||

|

||||

reloadCmd = &cobra.Command{

|

||||

Use: "reload",

|

||||

Short: "Reload the configuration in the daemon (daemon mode)",

|

||||

Long: `Reload the configuration from disk in the running daemon. Use after 'set' to apply changes without restarting the service.`,

|

||||

Args: cobra.NoArgs,

|

||||

RunE: reloadFunc,

|

||||

}

|

||||

)

|

||||

|

||||

// Execute executes the root command.

|

||||

@@ -145,6 +177,9 @@ func init() {

|

||||

rootCmd.AddCommand(networksCMD)

|

||||

rootCmd.AddCommand(forwardingRulesCmd)

|

||||

rootCmd.AddCommand(debugCmd)

|

||||

rootCmd.AddCommand(getCmd)

|

||||

rootCmd.AddCommand(showCmd)

|

||||

rootCmd.AddCommand(reloadCmd)

|

||||

|

||||

serviceCmd.AddCommand(runCmd, startCmd, stopCmd, restartCmd) // service control commands are subcommands of service

|

||||

serviceCmd.AddCommand(installCmd, uninstallCmd) // service installer commands are subcommands of service

|

||||

@@ -177,8 +212,11 @@ func init() {

|

||||

upCmd.PersistentFlags().BoolVar(&rosenpassPermissive, rosenpassPermissiveFlag, false, "[Experimental] Enable Rosenpass in permissive mode to allow this peer to accept WireGuard connections without requiring Rosenpass functionality from peers that do not have Rosenpass enabled.")

|

||||

upCmd.PersistentFlags().BoolVar(&serverSSHAllowed, serverSSHAllowedFlag, false, "Allow SSH server on peer. If enabled, the SSH server will be permitted")

|

||||

upCmd.PersistentFlags().BoolVar(&autoConnectDisabled, disableAutoConnectFlag, false, "Disables auto-connect feature. If enabled, then the client won't connect automatically when the service starts.")

|

||||

upCmd.PersistentFlags().BoolVar(&lazyConnEnabled, enableLazyConnectionFlag, false, "[Experimental] Enable the lazy connection feature. If enabled, the client will establish connections on-demand. Note: this setting may be overridden by management configuration.")

|

||||

upCmd.PersistentFlags().BoolVar(&lazyConnEnabled, enableLazyConnectionFlag, false, "[Experimental] Enable the lazy connection feature. If enabled, the client will establish connections on-demand.")

|

||||

|

||||

debugCmd.PersistentFlags().BoolVarP(&debugSystemInfoFlag, systemInfoFlag, "S", true, "Adds system information to the debug bundle")

|

||||

debugCmd.PersistentFlags().BoolVarP(&debugUploadBundle, uploadBundle, "U", false, fmt.Sprintf("Uploads the debug bundle to a server from URL defined by %s", uploadBundleURL))

|

||||

debugCmd.PersistentFlags().StringVar(&debugUploadBundleURL, uploadBundleURL, types.DefaultBundleURL, "Service URL to get an URL to upload the debug bundle")

|

||||

}

|

||||

|

||||

// SetupCloseHandler handles SIGTERM signal and exits with success

|

||||

@@ -398,3 +436,167 @@ func getClient(cmd *cobra.Command) (*grpc.ClientConn, error) {

|

||||

|

||||

return conn, nil

|

||||

}

|

||||

|

||||

func getFunc(cmd *cobra.Command, args []string) error {

|

||||

setting := args[0]

|

||||

upper := strings.ToUpper(strings.ReplaceAll(setting, "-", "_"))

|

||||

if v, ok := os.LookupEnv("NB_" + upper); ok {

|

||||

cmd.Println(v)

|

||||

return nil

|

||||

} else if v, ok := os.LookupEnv("WT_" + upper); ok {

|

||||

cmd.Println(v)

|

||||

return nil

|

||||

}

|

||||

config, err := internal.ReadConfig(configPath)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to read config: %v", err)

|

||||

}

|

||||

switch setting {

|

||||

case "management-url":

|

||||

cmd.Println(config.ManagementURL.String())

|

||||

case "admin-url":

|

||||

cmd.Println(config.AdminURL.String())

|

||||

case "interface-name":

|

||||

cmd.Println(config.WgIface)

|

||||

case "external-ip-map":

|

||||

cmd.Println(strings.Join(config.NATExternalIPs, ","))

|

||||

case "extra-iface-blacklist":

|

||||

cmd.Println(strings.Join(config.IFaceBlackList, ","))

|

||||

case "dns-resolver-address":

|

||||

cmd.Println(config.CustomDNSAddress)

|

||||

case "extra-dns-labels":

|

||||

cmd.Println(config.DNSLabels.SafeString())

|

||||

case "preshared-key":

|

||||

cmd.Println(config.PreSharedKey)

|

||||

case "enable-rosenpass":

|

||||

cmd.Println(config.RosenpassEnabled)

|

||||

case "rosenpass-permissive":

|

||||

cmd.Println(config.RosenpassPermissive)

|

||||

case "allow-server-ssh":

|

||||

if config.ServerSSHAllowed != nil {

|

||||

cmd.Println(*config.ServerSSHAllowed)

|

||||

} else {

|

||||

cmd.Println(false)

|

||||

}

|

||||

case "network-monitor":

|

||||

if config.NetworkMonitor != nil {

|

||||

cmd.Println(*config.NetworkMonitor)

|

||||

} else {

|

||||

cmd.Println(false)

|

||||

}

|

||||

case "disable-auto-connect":

|

||||

cmd.Println(config.DisableAutoConnect)

|

||||

case "disable-client-routes":

|

||||

cmd.Println(config.DisableClientRoutes)

|

||||

case "disable-server-routes":

|

||||

cmd.Println(config.DisableServerRoutes)

|

||||

case "disable-dns":

|

||||

cmd.Println(config.DisableDNS)

|

||||

case "disable-firewall":

|

||||

cmd.Println(config.DisableFirewall)

|

||||

case "block-lan-access":

|

||||

cmd.Println(config.BlockLANAccess)

|

||||

case "block-inbound":

|

||||

cmd.Println(config.BlockInbound)

|

||||

case "enable-lazy-connection":

|

||||

cmd.Println(config.LazyConnectionEnabled)

|

||||

case "wireguard-port":

|

||||

cmd.Println(config.WgPort)

|

||||

case "dns-router-interval":

|

||||

cmd.Println(config.DNSRouteInterval)

|

||||

default:

|

||||

return fmt.Errorf("unknown setting: %s", setting)

|

||||

}

|

||||

return nil

|

||||

}

|

||||

|

||||

func showFunc(cmd *cobra.Command, args []string) error {

|

||||

config, err := internal.ReadConfig(configPath)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to read config: %v", err)

|

||||

}

|

||||

settings := []string{

|

||||

"management-url", "admin-url", "interface-name", "external-ip-map", "extra-iface-blacklist", "dns-resolver-address", "extra-dns-labels", "preshared-key", "enable-rosenpass", "rosenpass-permissive", "allow-server-ssh", "network-monitor", "disable-auto-connect", "disable-client-routes", "disable-server-routes", "disable-dns", "disable-firewall", "block-lan-access", "block-inbound", "enable-lazy-connection", "wireguard-port", "dns-router-interval",

|

||||

}

|

||||

for _, setting := range settings {

|

||||

upper := strings.ToUpper(strings.ReplaceAll(setting, "-", "_"))

|

||||

var val string

|

||||

if v, ok := os.LookupEnv("NB_" + upper); ok {

|

||||

val = v + " (from NB_ env)"

|

||||

} else if v, ok := os.LookupEnv("WT_" + upper); ok {

|

||||

val = v + " (from WT_ env)"

|

||||

} else {

|

||||

switch setting {

|

||||

case "management-url":

|

||||

val = config.ManagementURL.String()

|

||||

case "admin-url":

|

||||

val = config.AdminURL.String()

|

||||

case "interface-name":

|

||||

val = config.WgIface

|

||||

case "external-ip-map":

|

||||

val = strings.Join(config.NATExternalIPs, ",")

|

||||

case "extra-iface-blacklist":

|

||||

val = strings.Join(config.IFaceBlackList, ",")

|

||||

case "dns-resolver-address":

|

||||

val = config.CustomDNSAddress

|

||||

case "extra-dns-labels":

|

||||

val = config.DNSLabels.SafeString()

|

||||

case "preshared-key":

|

||||

val = config.PreSharedKey

|

||||

case "enable-rosenpass":

|

||||

val = fmt.Sprintf("%v", config.RosenpassEnabled)

|

||||

case "rosenpass-permissive":

|

||||

val = fmt.Sprintf("%v", config.RosenpassPermissive)

|

||||

case "allow-server-ssh":

|

||||

if config.ServerSSHAllowed != nil {

|

||||

val = fmt.Sprintf("%v", *config.ServerSSHAllowed)

|

||||

} else {

|

||||

val = "false"

|

||||

}

|

||||

case "network-monitor":

|

||||

if config.NetworkMonitor != nil {

|

||||

val = fmt.Sprintf("%v", *config.NetworkMonitor)

|

||||

} else {

|

||||

val = "false"

|

||||

}

|

||||

case "disable-auto-connect":

|

||||

val = fmt.Sprintf("%v", config.DisableAutoConnect)

|

||||

case "disable-client-routes":

|

||||

val = fmt.Sprintf("%v", config.DisableClientRoutes)

|

||||

case "disable-server-routes":

|

||||

val = fmt.Sprintf("%v", config.DisableServerRoutes)

|

||||

case "disable-dns":

|

||||

val = fmt.Sprintf("%v", config.DisableDNS)

|

||||

case "disable-firewall":

|

||||

val = fmt.Sprintf("%v", config.DisableFirewall)

|

||||

case "block-lan-access":

|

||||

val = fmt.Sprintf("%v", config.BlockLANAccess)

|

||||

case "block-inbound":

|

||||

val = fmt.Sprintf("%v", config.BlockInbound)

|

||||

case "enable-lazy-connection":

|

||||

val = fmt.Sprintf("%v", config.LazyConnectionEnabled)

|

||||

case "wireguard-port":

|

||||

val = fmt.Sprintf("%d", config.WgPort)

|

||||

case "dns-router-interval":

|

||||

val = config.DNSRouteInterval.String()

|

||||

}

|

||||

}

|

||||

cmd.Printf("%-22s: %s\n", setting, val)

|

||||

}

|

||||

return nil

|

||||

}

|

||||

|

||||

func reloadFunc(cmd *cobra.Command, args []string) error {

|

||||

conn, err := getClient(cmd)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

defer conn.Close()

|

||||

client := proto.NewDaemonServiceClient(conn)

|

||||

_, err = client.ReloadConfig(cmd.Context(), &proto.ReloadConfigRequest{})

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to reload config in daemon: %v", err)

|

||||

}

|

||||

cmd.Println("Configuration reloaded in daemon.")

|

||||

return nil

|

||||

}

|

||||

|

||||

475

client/cmd/set.go

Normal file


@@ -0,0 +1,475 @@

|

||||

package cmd

|

||||

|

||||

import (

|

||||

"fmt"

|

||||

"os"

|

||||

osuser "os/user"

|

||||

"strings"

|

||||

"time"

|

||||

|

||||

"github.com/netbirdio/netbird/client/internal"

|

||||

"github.com/netbirdio/netbird/client/proto"

|

||||

"github.com/netbirdio/netbird/management/domain"

|

||||

"github.com/spf13/cobra"

|

||||

"google.golang.org/grpc/status"

|

||||

)

|

||||

|

||||

var setCmd = &cobra.Command{

|

||||

Use: "set <setting> <value>",

|

||||

Short: "Set a configuration value without running up",

|

||||

Long: `Set a configuration value in the Netbird config file without running 'up'.

|

||||

|

||||

You can also set values via environment variables NB_<SETTING> or WT_<SETTING> (e.g. NB_INTERFACE_NAME=utun5 netbird set interface-name).

|

||||

|

||||

Supported settings:

|

||||

management-url (string) e.g. https://api.netbird.io:443

|

||||

admin-url (string) e.g. https://app.netbird.io:443

|

||||

interface-name (string) e.g. utun5

|

||||

external-ip-map (list) comma-separated, e.g. 12.34.56.78,12.34.56.79/eth0

|

||||

extra-iface-blacklist (list) comma-separated, e.g. eth1,eth2

|

||||

dns-resolver-address (string) e.g. 127.0.0.1:5053

|

||||

extra-dns-labels (list) comma-separated, e.g. vpc1,mgmt1

|

||||

preshared-key (string)

|

||||

enable-rosenpass (bool) true/false

|

||||

rosenpass-permissive (bool) true/false

|

||||

allow-server-ssh (bool) true/false

|

||||

network-monitor (bool) true/false

|

||||

disable-auto-connect (bool) true/false

|

||||

disable-client-routes (bool) true/false

|

||||

disable-server-routes (bool) true/false

|

||||

disable-dns (bool) true/false

|

||||

disable-firewall (bool) true/false

|

||||

block-lan-access (bool) true/false

|

||||

block-inbound (bool) true/false

|

||||

enable-lazy-connection (bool) true/false

|

||||

wireguard-port (int) e.g. 51820

|

||||

dns-router-interval (duration) e.g. 1m, 30s

|

||||

|

||||

Examples:

|

||||

NB_INTERFACE_NAME=utun5 netbird set interface-name

|

||||

netbird set wireguard-port 51820

|

||||

netbird set external-ip-map 12.34.56.78,12.34.56.79/eth0

|

||||

netbird set enable-rosenpass true

|

||||

netbird set dns-router-interval 2m

|

||||

netbird set extra-dns-labels vpc1,mgmt1

|

||||

netbird set disable-firewall true

|

||||

`,

|

||||

Args: cobra.RangeArgs(1, 2), // one argument is enough when the value comes from NB_<SETTING> or WT_<SETTING>

|

||||

RunE: setFunc,

|

||||

}

|

||||

|

||||

func init() {

|

||||

rootCmd.AddCommand(setCmd)

|

||||

}

|

||||

|

||||

func setFunc(cmd *cobra.Command, args []string) error {

|

||||

setting := args[0]

|

||||

var value string

|

||||

|

||||

// Check environment variables first

|

||||

upper := strings.ToUpper(strings.ReplaceAll(setting, "-", "_"))

|

||||

if v, ok := os.LookupEnv("NB_" + upper); ok {

|

||||

value = v

|

||||

} else if v, ok := os.LookupEnv("WT_" + upper); ok {

|

||||

value = v

|

||||

} else {

|

||||

if len(args) < 2 {

|

||||

return fmt.Errorf("missing value for setting %s", setting)

|

||||

}

|

||||

value = args[1]

|

||||

}

|

||||

|

||||

// If not root, try to use the daemon (only if cmd is not nil)

|

||||

if cmd != nil {

|

||||

if u, err := osuser.Current(); err == nil && u.Uid != "0" {

|

||||

conn, err := getClient(cmd)

|

||||

if err == nil {

|

||||

defer conn.Close()

|

||||

client := proto.NewDaemonServiceClient(conn)

|

||||

_, err = client.SetConfigValue(cmd.Context(), &proto.SetConfigValueRequest{Setting: setting, Value: value})

|

||||

if err == nil {

|

||||

cmd.Println("Configuration updated via daemon.")

|

||||

return nil

|

||||

}

|

||||

if s, ok := status.FromError(err); ok {

|

||||

return fmt.Errorf("daemon error: %v", s.Message())

|

||||

}

|

||||

return fmt.Errorf("failed to update config via daemon: %v", err)

|

||||

}

|

||||

// else: fall back to direct file write

|

||||

}

|

||||

}

|

||||

|

||||

switch setting {

|

||||

case "management-url":

|

||||

input := internal.ConfigInput{ConfigPath: configPath, ManagementURL: value}

|

||||

_, err := internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set management-url: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set management-url to: %s\n", value)

|

||||

} else {

|

||||

fmt.Printf("Set management-url to: %s\n", value)

|

||||

}

|

||||

case "admin-url":

|

||||

input := internal.ConfigInput{ConfigPath: configPath, AdminURL: value}

|

||||

_, err := internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set admin-url: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set admin-url to: %s\n", value)

|

||||

} else {

|

||||

fmt.Printf("Set admin-url to: %s\n", value)

|

||||

}

|

||||

case "interface-name":

|

||||

if err := parseInterfaceName(value); err != nil {

|

||||

return err

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, InterfaceName: &value}

|

||||

_, err := internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set interface-name: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set interface-name to: %s\n", value)

|

||||

} else {

|

||||

fmt.Printf("Set interface-name to: %s\n", value)

|

||||

}

|

||||

case "external-ip-map":

|

||||

var ips []string

|

||||

if value == "" {

|

||||

ips = []string{}

|

||||

} else {

|

||||

ips = strings.Split(value, ",")

|

||||

}

|

||||

if err := validateNATExternalIPs(ips); err != nil {

|

||||

return err

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, NATExternalIPs: ips}

|

||||

_, err := internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set external-ip-map: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set external-ip-map to: %v\n", ips)

|

||||

} else {

|

||||

fmt.Printf("Set external-ip-map to: %v\n", ips)

|

||||

}

|

||||

case "extra-iface-blacklist":

|

||||

var ifaces []string

|

||||

if value == "" {

|

||||

ifaces = []string{}

|

||||

} else {

|

||||

ifaces = strings.Split(value, ",")

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, ExtraIFaceBlackList: ifaces}

|

||||

_, err := internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set extra-iface-blacklist: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set extra-iface-blacklist to: %v\n", ifaces)

|

||||

} else {

|

||||

fmt.Printf("Set extra-iface-blacklist to: %v\n", ifaces)

|

||||

}

|

||||

case "dns-resolver-address":

|

||||

if value != "" && !isValidAddrPort(value) {

|

||||

return fmt.Errorf("%s is invalid, it should be formatted as IP:Port string or as an empty string like \"\"", value)

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, CustomDNSAddress: []byte(value)}

|

||||

_, err := internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set dns-resolver-address: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set dns-resolver-address to: %s\n", value)

|

||||

} else {

|

||||

fmt.Printf("Set dns-resolver-address to: %s\n", value)

|

||||

}

|

||||

case "extra-dns-labels":

|

||||

var labels []string

|

||||

if value == "" {

|

||||

labels = []string{}

|

||||

} else {

|

||||

labels = strings.Split(value, ",")

|

||||

}

|

||||

domains, err := domain.ValidateDomains(labels)

|

||||

if err != nil {

|

||||

return fmt.Errorf("invalid DNS labels: %v", err)

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, DNSLabels: domains}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set extra-dns-labels: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set extra-dns-labels to: %v\n", labels)

|

||||

} else {

|

||||

fmt.Printf("Set extra-dns-labels to: %v\n", labels)

|

||||

}

|

||||

case "preshared-key":

|

||||

input := internal.ConfigInput{ConfigPath: configPath, PreSharedKey: &value}

|

||||

_, err := internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set preshared-key: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set preshared-key to: %s\n", value)

|

||||

} else {

|

||||

fmt.Printf("Set preshared-key to: %s\n", value)

|

||||

}

|

||||

case "hostname":

|

||||

// Hostname is not persisted in config, so just print a warning

|

||||

if cmd != nil {

|

||||

cmd.Printf("Warning: hostname is not persisted in config. Use --hostname with up command.\n")

|

||||

} else {

|

||||

fmt.Printf("Warning: hostname is not persisted in config. Use --hostname with up command.\n")

|

||||

}

|

||||

case "enable-rosenpass":

|

||||

b, err := parseBool(value)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, RosenpassEnabled: &b}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set enable-rosenpass: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set enable-rosenpass to: %v\n", b)

|

||||

} else {

|

||||

fmt.Printf("Set enable-rosenpass to: %v\n", b)

|

||||

}

|

||||

case "rosenpass-permissive":

|

||||

b, err := parseBool(value)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, RosenpassPermissive: &b}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set rosenpass-permissive: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set rosenpass-permissive to: %v\n", b)

|

||||

} else {

|

||||

fmt.Printf("Set rosenpass-permissive to: %v\n", b)

|

||||

}

|

||||

case "allow-server-ssh":

|

||||

b, err := parseBool(value)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, ServerSSHAllowed: &b}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set allow-server-ssh: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set allow-server-ssh to: %v\n", b)

|

||||

} else {

|

||||

fmt.Printf("Set allow-server-ssh to: %v\n", b)

|

||||

}

|

||||

case "network-monitor":

|

||||

b, err := parseBool(value)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, NetworkMonitor: &b}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set network-monitor: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set network-monitor to: %v\n", b)

|

||||

} else {

|

||||

fmt.Printf("Set network-monitor to: %v\n", b)

|

||||

}

|

||||

case "disable-auto-connect":

|

||||

b, err := parseBool(value)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, DisableAutoConnect: &b}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set disable-auto-connect: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set disable-auto-connect to: %v\n", b)

|

||||

} else {

|

||||

fmt.Printf("Set disable-auto-connect to: %v\n", b)

|

||||

}

|

||||

case "disable-client-routes":

|

||||

b, err := parseBool(value)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, DisableClientRoutes: &b}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set disable-client-routes: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set disable-client-routes to: %v\n", b)

|

||||

} else {

|

||||

fmt.Printf("Set disable-client-routes to: %v\n", b)

|

||||

}

|

||||

case "disable-server-routes":

|

||||

b, err := parseBool(value)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, DisableServerRoutes: &b}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set disable-server-routes: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set disable-server-routes to: %v\n", b)

|

||||

} else {

|

||||

fmt.Printf("Set disable-server-routes to: %v\n", b)

|

||||

}

|

||||

case "disable-dns":

|

||||

b, err := parseBool(value)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, DisableDNS: &b}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set disable-dns: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set disable-dns to: %v\n", b)

|

||||

} else {

|

||||

fmt.Printf("Set disable-dns to: %v\n", b)

|

||||

}

|

||||

case "disable-firewall":

|

||||

b, err := parseBool(value)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, DisableFirewall: &b}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set disable-firewall: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set disable-firewall to: %v\n", b)

|

||||

} else {

|

||||

fmt.Printf("Set disable-firewall to: %v\n", b)

|

||||

}

|

||||

case "block-lan-access":

|

||||

b, err := parseBool(value)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, BlockLANAccess: &b}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set block-lan-access: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set block-lan-access to: %v\n", b)

|

||||

} else {

|

||||

fmt.Printf("Set block-lan-access to: %v\n", b)

|

||||

}

|

||||

case "block-inbound":

|

||||

b, err := parseBool(value)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, BlockInbound: &b}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set block-inbound: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set block-inbound to: %v\n", b)

|

||||

} else {

|

||||

fmt.Printf("Set block-inbound to: %v\n", b)

|

||||

}

|

||||

case "enable-lazy-connection":

|

||||

b, err := parseBool(value)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, LazyConnectionEnabled: &b}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set enable-lazy-connection: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set enable-lazy-connection to: %v\n", b)

|

||||

} else {

|

||||

fmt.Printf("Set enable-lazy-connection to: %v\n", b)

|

||||

}

|

||||

case "wireguard-port":

|

||||

p, err := parseUint16(value)

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

pi := int(p)

|

||||

input := internal.ConfigInput{ConfigPath: configPath, WireguardPort: &pi}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set wireguard-port: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set wireguard-port to: %d\n", p)

|

||||

} else {

|

||||

fmt.Printf("Set wireguard-port to: %d\n", p)

|

||||

}

|

||||

case "dns-router-interval":

|

||||

d, err := time.ParseDuration(value)

|

||||

if err != nil {

|

||||

return fmt.Errorf("invalid duration: %v", err)

|

||||

}

|

||||

input := internal.ConfigInput{ConfigPath: configPath, DNSRouteInterval: &d}

|

||||

_, err = internal.UpdateOrCreateConfig(input)

|

||||

if err != nil {

|

||||

return fmt.Errorf("failed to set dns-router-interval: %v", err)

|

||||

}

|

||||

if cmd != nil {

|

||||

cmd.Printf("Set dns-router-interval to: %s\n", d)

|

||||

} else {

|

||||

fmt.Printf("Set dns-router-interval to: %s\n", d)

|

||||

}

|

||||

default:

|

||||

return fmt.Errorf("unknown setting: %s", setting)

|

||||

}

|

||||

|

||||

if cmd != nil {

|

||||

cmd.Println("Configuration updated successfully.")

|

||||

} else {

|

||||

fmt.Println("Configuration updated successfully.")

|

||||

}

|

||||

return nil

|

||||

}

|

||||

|

||||
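Review note: the boolean cases in the switch above differ only in the `ConfigInput` field they fill and the name they print. A minimal sketch of a table-driven alternative follows. The `ConfigInput` type here is a hypothetical three-field stand-in for `internal.ConfigInput`, and `boolSetters` and `applyBoolSetting` are illustrative names that do not appear in the diff.

```go
package main

import (
	"fmt"
	"strings"
)

// ConfigInput is a hypothetical stand-in for internal.ConfigInput,
// reduced to three of the boolean fields used in the diff.
type ConfigInput struct {
	RosenpassEnabled *bool
	DisableDNS       *bool
	BlockInbound     *bool
}

// boolSetters maps a setting name to a function that stores the parsed value.
var boolSetters = map[string]func(*ConfigInput, *bool){
	"enable-rosenpass": func(in *ConfigInput, b *bool) { in.RosenpassEnabled = b },
	"disable-dns":      func(in *ConfigInput, b *bool) { in.DisableDNS = b },
	"block-inbound":    func(in *ConfigInput, b *bool) { in.BlockInbound = b },
}

// parseBool mirrors the helper in the diff: true/false/1/0, case-insensitive.
func parseBool(val string) (bool, error) {
	switch strings.ToLower(val) {
	case "true", "1":
		return true, nil
	case "false", "0":
		return false, nil
	}
	return false, fmt.Errorf("invalid boolean value: %s", val)
}

// applyBoolSetting fills in the matching field or reports an unknown setting.
func applyBoolSetting(in *ConfigInput, setting, value string) error {
	set, ok := boolSetters[setting]
	if !ok {
		return fmt.Errorf("unknown setting: %s", setting)
	}
	b, err := parseBool(value)
	if err != nil {
		return err
	}
	set(in, &b)
	return nil
}

func main() {
	var in ConfigInput
	if err := applyBoolSetting(&in, "disable-dns", "TRUE"); err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println("disable-dns:", *in.DisableDNS)
}
```

With this shape, adding a boolean setting would mean adding one map entry rather than a new case block; persisting and printing could then happen once after the lookup.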

func parseBool(val string) (bool, error) {

|

||||

v := strings.ToLower(val)

|

||||

if v == "true" || v == "1" {

|

||||

return true, nil

|

||||

}

|

||||

if v == "false" || v == "0" {

|

||||

return false, nil

|

||||

}

|

||||

return false, fmt.Errorf("invalid boolean value: %s", val)

|

||||

}

|

||||

|

||||

func parseUint16(val string) (uint16, error) {

|

||||

var p uint16

|

||||

_, err := fmt.Sscanf(val, "%d", &p)

|

||||

if err != nil {

|

||||

return 0, fmt.Errorf("invalid uint16 value: %s", val)

|

||||

}

|

||||

return p, nil

|

||||

}

|

||||
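Review note: `parseUint16` above relies on `fmt.Sscanf` with `%d`, which, as far as I can tell, stops at the first non-digit and therefore accepts input with trailing characters such as `51820abc`. A minimal sketch of a stricter variant is shown below, assuming `strconv.ParseUint` is acceptable here. `parsePortStrict` is a hypothetical name and is not part of the diff.

```go
package main

import (
	"fmt"
	"strconv"
)

// parsePortStrict is a hypothetical alternative to parseUint16.
// ParseUint with bitSize 16 rejects signs, trailing characters,
// and values above 65535.
func parsePortStrict(val string) (uint16, error) {
	n, err := strconv.ParseUint(val, 10, 16)
	if err != nil {
		return 0, fmt.Errorf("invalid uint16 value: %s", val)
	}
	return uint16(n), nil
}

func main() {
	for _, s := range []string{"51820", "51820abc", "70000", "-1"} {
		p, err := parsePortStrict(s)
		fmt.Printf("%q -> %d, err=%v\n", s, p, err)
	}
}
```

Only the first input in `main` parses successfully; the other three return the same error message format the original helper uses.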

162

client/cmd/set_test.go

Normal file

@@ -0,0 +1,162 @@

|

||||

package cmd

|

||||

|

||||

import (

|

||||

"os"

|

||||

"strings"

|

||||

"testing"

|

||||

"time"

|

||||

|

||||

"github.com/netbirdio/netbird/client/internal"

|

||||

"github.com/stretchr/testify/require"

|

||||

)

|

||||

|

||||

func TestSetCommand_AllSettings(t *testing.T) {

|

||||

tempFile, err := os.CreateTemp("", "config.json")

|

||||

require.NoError(t, err)

|

||||

defer os.Remove(tempFile.Name())

|

||||

|

||||

// Write an empty JSON object to the config file to avoid JSON parse errors

|

||||

_, err = tempFile.WriteString("{}")

|

||||

require.NoError(t, err)

|

||||

tempFile.Close()

|

||||

|

||||

configPath = tempFile.Name()

|

||||

|

||||

tests := []struct {

|

||||

setting string

|

||||

value string

|

||||

verify func(*testing.T, *internal.Config)

|

||||

wantErr bool

|

||||

}{

|

||||

{"management-url", "https://test.mgmt:443", func(t *testing.T, c *internal.Config) {

|

||||

require.Equal(t, "https://test.mgmt:443", c.ManagementURL.String())

|

||||

}, false},

|

||||

{"admin-url", "https://test.admin:443", func(t *testing.T, c *internal.Config) {

|

||||

require.Equal(t, "https://test.admin:443", c.AdminURL.String())

|

||||

}, false},

|

||||

{"interface-name", "utun99", func(t *testing.T, c *internal.Config) {

|

||||

require.Equal(t, "utun99", c.WgIface)

|

||||

}, false},

|

||||

{"external-ip-map", "12.34.56.78,12.34.56.79", func(t *testing.T, c *internal.Config) {

|

||||

require.Equal(t, []string{"12.34.56.78", "12.34.56.79"}, c.NATExternalIPs)

|

||||

}, false},

|

||||

{"extra-iface-blacklist", "eth1,eth2", func(t *testing.T, c *internal.Config) {

|

||||

require.Contains(t, c.IFaceBlackList, "eth1")

|

||||

require.Contains(t, c.IFaceBlackList, "eth2")

|

||||

}, false},

|

||||

{"dns-resolver-address", "127.0.0.1:5053", func(t *testing.T, c *internal.Config) {

|

||||

require.Equal(t, "127.0.0.1:5053", c.CustomDNSAddress)

|

||||

}, false},

|

||||

{"extra-dns-labels", "vpc1,mgmt1", func(t *testing.T, c *internal.Config) {

|

||||

require.True(t, strings.Contains(c.DNSLabels.SafeString(), "vpc1"))

|

||||

require.True(t, strings.Contains(c.DNSLabels.SafeString(), "mgmt1"))

|

||||

}, false},

|

||||

{"preshared-key", "testkey", func(t *testing.T, c *internal.Config) {

|

||||

require.Equal(t, "testkey", c.PreSharedKey)

|

||||

}, false},

|

||||

{"enable-rosenpass", "true", func(t *testing.T, c *internal.Config) {

|

||||

require.True(t, c.RosenpassEnabled)

|

||||

}, false},

|

||||

{"rosenpass-permissive", "false", func(t *testing.T, c *internal.Config) {

|

||||

require.False(t, c.RosenpassPermissive)

|

||||

}, false},

|

||||

{"allow-server-ssh", "true", func(t *testing.T, c *internal.Config) {

|

||||

require.NotNil(t, c.ServerSSHAllowed)

|

||||

require.True(t, *c.ServerSSHAllowed)

|

||||

}, false},

|

||||

{"network-monitor", "false", func(t *testing.T, c *internal.Config) {

|

||||

require.NotNil(t, c.NetworkMonitor)

|

||||

require.False(t, *c.NetworkMonitor)

|

||||

}, false},

|

||||

{"disable-auto-connect", "true", func(t *testing.T, c *internal.Config) {

|

||||

require.True(t, c.DisableAutoConnect)

|

||||

}, false},

|

||||

{"disable-client-routes", "false", func(t *testing.T, c *internal.Config) {

|

||||

require.False(t, c.DisableClientRoutes)

|

||||

}, false},

|

||||

{"disable-server-routes", "true", func(t *testing.T, c *internal.Config) {

|

||||

require.True(t, c.DisableServerRoutes)

|

||||

}, false},

|

||||

{"disable-dns", "false", func(t *testing.T, c *internal.Config) {

|

||||

require.False(t, c.DisableDNS)

|

||||

}, false},

|

||||

{"disable-firewall", "true", func(t *testing.T, c *internal.Config) {

|

||||

require.True(t, c.DisableFirewall)

|

||||

}, false},

|

||||

{"block-lan-access", "true", func(t *testing.T, c *internal.Config) {

|

||||

require.True(t, c.BlockLANAccess)

|

||||

}, false},

|

||||

{"block-inbound", "false", func(t *testing.T, c *internal.Config) {

|

||||

require.False(t, c.BlockInbound)

|

||||

}, false},

|

||||

{"enable-lazy-connection", "true", func(t *testing.T, c *internal.Config) {

|

||||

require.True(t, c.LazyConnectionEnabled)

|

||||

}, false},

|

||||

{"wireguard-port", "51820", func(t *testing.T, c *internal.Config) {

|

||||

require.Equal(t, 51820, c.WgPort)

|

||||

}, false},

|

||||

{"dns-router-interval", "2m", func(t *testing.T, c *internal.Config) {

|

||||

require.Equal(t, 2*time.Minute, c.DNSRouteInterval)

|

||||

}, false},

|

||||

// Invalid cases

|

||||

{"enable-rosenpass", "notabool", nil, true},

|

||||

{"wireguard-port", "notanint", nil, true},

|

||||

{"dns-router-interval", "notaduration", nil, true},

|

||||

}

|

||||

|

||||

for _, tt := range tests {

|

||||

t.Run(tt.setting+"="+tt.value, func(t *testing.T) {

|

||||

args := []string{tt.setting, tt.value}

|

||||

err := setFunc(nil, args)

|

||||

if tt.wantErr {

|

||||

require.Error(t, err)

|

||||

return

|

||||

}

|

||||

require.NoError(t, err)

|

||||

config, err := internal.ReadConfig(configPath)

|

||||

require.NoError(t, err)

|

||||

if tt.verify != nil {

|

||||

tt.verify(t, config)

|

||||

}

|

||||

})

|

||||

}

|

||||

}

|

||||

|

||||

func TestSetCommand_EnvVars(t *testing.T) {

|

||||

tempFile, err := os.CreateTemp("", "config.json")

|

||||

require.NoError(t, err)

|

||||

defer os.Remove(tempFile.Name())

|

||||

|

||||

// Write an empty JSON object to the config file to avoid JSON parse errors

|

||||

_, err = tempFile.WriteString("{}")

|

||||

require.NoError(t, err)

|

||||

tempFile.Close()

|

||||

|

||||

configPath = tempFile.Name()

|

||||

|

||||

os.Setenv("NB_INTERFACE_NAME", "utun77")

|

||||

defer os.Unsetenv("NB_INTERFACE_NAME")

|

||||

args := []string{"interface-name", "utun99"}

|

||||

err = setFunc(nil, args)

|

||||

require.NoError(t, err)

|

||||

config, err := internal.ReadConfig(configPath)

|

||||

require.NoError(t, err)

|

||||

require.Equal(t, "utun77", config.WgIface)

|

||||

|

||||

os.Unsetenv("NB_INTERFACE_NAME")

|

||||

os.Setenv("WT_INTERFACE_NAME", "utun88")

|

||||

defer os.Unsetenv("WT_INTERFACE_NAME")

|

||||

err = setFunc(nil, args)

|

||||

require.NoError(t, err)

|

||||

config, err = internal.ReadConfig(configPath)

|

||||

require.NoError(t, err)

|

||||

require.Equal(t, "utun88", config.WgIface)

|

||||

|

||||

os.Unsetenv("WT_INTERFACE_NAME")

|

||||

// No env var, should use CLI value

|

||||

err = setFunc(nil, args)

|

||||

require.NoError(t, err)

|

||||

config, err = internal.ReadConfig(configPath)

|

||||

require.NoError(t, err)

|

||||

require.Equal(t, "utun99", config.WgIface)

|

||||

}

|

||||
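Review note: `TestSetCommand_EnvVars` above expects an `NB_`-prefixed environment variable to override the CLI value, a `WT_`-prefixed one to do the same when `NB_` is unset, and the CLI value to be used otherwise. The sketch below illustrates that lookup order. It is not the implementation from the diff: `envKey` and `resolveValue` are hypothetical names, and checking `NB_` before `WT_` when both are set is an assumption, since the test never sets both at once.

```go
package main

import (
	"fmt"
	"os"
	"strings"
)

// envKey turns a setting such as "interface-name" into "INTERFACE_NAME".
func envKey(setting string) string {
	return strings.ToUpper(strings.ReplaceAll(setting, "-", "_"))
}

// resolveValue returns the NB_ variable if set, then the WT_ variable,
// and falls back to the CLI value. The lookup function is injected so the
// precedence can be exercised without touching the process environment.
func resolveValue(setting, cliValue string, lookup func(string) (string, bool)) string {
	for _, prefix := range []string{"NB_", "WT_"} {
		if v, ok := lookup(prefix + envKey(setting)); ok {
			return v
		}
	}
	return cliValue
}

func main() {
	// Uses the real environment; the result depends on what is exported.
	fmt.Println(resolveValue("interface-name", "utun99", os.LookupEnv))
}
```

Passing the lookup as a parameter keeps tests independent of global state, which avoids the `os.Setenv`/`os.Unsetenv` bookkeeping seen in the test above.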

@@ -26,7 +26,6 @@ var (

|

||||

statusFilter string

|

||||

ipsFilterMap map[string]struct{}

|

||||

prefixNamesFilterMap map[string]struct{}

|

||||

connectionTypeFilter string

|

||||

)

|

||||

|

||||

var statusCmd = &cobra.Command{

|

||||

@@ -46,7 +45,6 @@ func init() {

|

||||

statusCmd.PersistentFlags().StringSliceVar(&ipsFilter, "filter-by-ips", []string{}, "filters the detailed output by a list of one or more IPs, e.g., --filter-by-ips 100.64.0.100,100.64.0.200")

|

||||

statusCmd.PersistentFlags().StringSliceVar(&prefixNamesFilter, "filter-by-names", []string{}, "filters the detailed output by a list of one or more peer FQDN or hostnames, e.g., --filter-by-names peer-a,peer-b.netbird.cloud")

|

||||

statusCmd.PersistentFlags().StringVar(&statusFilter, "filter-by-status", "", "filters the detailed output by connection status (idle|connecting|connected), e.g., --filter-by-status connected")

|

||||

statusCmd.PersistentFlags().StringVar(&connectionTypeFilter, "filter-by-connection-type", "", "filters the detailed output by connection type (P2P|Relayed), e.g., --filter-by-connection-type P2P")

|

||||

}

|

||||

|

||||

func statusFunc(cmd *cobra.Command, args []string) error {

|

||||

@@ -91,7 +89,7 @@ func statusFunc(cmd *cobra.Command, args []string) error {

|

||||

return nil

|

||||

}

|

||||

|

||||

var outputInformationHolder = nbstatus.ConvertToStatusOutputOverview(resp, anonymizeFlag, statusFilter, prefixNamesFilter, prefixNamesFilterMap, ipsFilterMap, connectionTypeFilter)

|

||||

var outputInformationHolder = nbstatus.ConvertToStatusOutputOverview(resp, anonymizeFlag, statusFilter, prefixNamesFilter, prefixNamesFilterMap, ipsFilterMap)

|

||||

var statusOutputString string

|

||||

switch {

|

||||

case detailFlag:

|

||||

@@ -158,15 +156,6 @@ func parseFilters() error {

|

||||

enableDetailFlagWhenFilterFlag()

|

||||

}

|

||||

|

||||

switch strings.ToLower(connectionTypeFilter) {

|

||||

case "", "p2p", "relayed":

|

||||

if strings.ToLower(connectionTypeFilter) != "" {

|

||||

enableDetailFlagWhenFilterFlag()

|

||||

}

|

||||

default:

|

||||

return fmt.Errorf("wrong connection-type filter, should be one of P2P|Relayed, got: %s", connectionTypeFilter)

|

||||

}

|

||||

|

||||

return nil

|

||||

}

|

||||

|

||||

|

||||

@@ -109,7 +109,7 @@ func startManagement(t *testing.T, config *types.Config, testFile string) (*grpc

|

||||

}

|

||||

|

||||

secretsManager := mgmt.NewTimeBasedAuthSecretsManager(peersUpdateManager, config.TURNConfig, config.Relay, settingsMockManager)

|

||||

mgmtServer, err := mgmt.NewServer(context.Background(), config, accountManager, settingsMockManager, peersUpdateManager, secretsManager, nil, nil, nil, &mgmt.MockIntegratedValidator{})

|

||||

mgmtServer, err := mgmt.NewServer(context.Background(), config, accountManager, settingsMockManager, peersUpdateManager, secretsManager, nil, nil, nil)

|

||||

if err != nil {

|

||||

t.Fatal(err)

|

||||

}

|

||||

|

||||

@@ -1,408 +0,0 @@

|

||||

package uspfilter

|

||||

|

||||

import (

|

||||

"encoding/binary"

|

||||

"errors"

|

||||

"fmt"

|

||||

"net/netip"

|

||||

|

||||

"github.com/google/gopacket/layers"

|

||||

|

||||

firewall "github.com/netbirdio/netbird/client/firewall/manager"

|

||||

)

|

||||

|

||||

var ErrIPv4Only = errors.New("only IPv4 is supported for DNAT")

|

||||

|

||||

func ipv4Checksum(header []byte) uint16 {

|

||||

if len(header) < 20 {

|

||||

return 0

|

||||

}

|

||||

|

||||

var sum1, sum2 uint32

|

||||

|

||||

// Unrolled over the fixed 20-byte header, using two independent accumulators

|

||||

sum1 += uint32(binary.BigEndian.Uint16(header[0:2]))

|

||||

sum2 += uint32(binary.BigEndian.Uint16(header[2:4]))

|

||||

sum1 += uint32(binary.BigEndian.Uint16(header[4:6]))

|

||||

sum2 += uint32(binary.BigEndian.Uint16(header[6:8]))

|

||||

sum1 += uint32(binary.BigEndian.Uint16(header[8:10]))

|

||||

// Skip checksum field at [10:12]

|

||||

sum2 += uint32(binary.BigEndian.Uint16(header[12:14]))

|

||||

sum1 += uint32(binary.BigEndian.Uint16(header[14:16]))

|

||||

sum2 += uint32(binary.BigEndian.Uint16(header[16:18]))

|

||||

sum1 += uint32(binary.BigEndian.Uint16(header[18:20]))

|

||||

|

||||

sum := sum1 + sum2

|

||||

|

||||

// Handle remaining bytes for headers > 20 bytes

|

||||

for i := 20; i < len(header)-1; i += 2 {

|

||||

sum += uint32(binary.BigEndian.Uint16(header[i : i+2]))

|

||||

}

|

||||

|

||||

if len(header)%2 == 1 {

|

||||

sum += uint32(header[len(header)-1]) << 8

|

||||

}

|

||||

|

||||

// Carry fold: after one fold sum <= 0x1FFFE, so a conditional increment (truncated by the uint16 conversion below) finishes it

|

||||

sum = (sum & 0xFFFF) + (sum >> 16)

|

||||

if sum > 0xFFFF {

|

||||

sum++

|

||||

}

|

||||

|

||||

return ^uint16(sum)

|

||||

}

|

||||

|

||||

func icmpChecksum(data []byte) uint16 {

|

||||

var sum1, sum2, sum3, sum4 uint32

|

||||

i := 0

|

||||

|

||||

// Process 16 bytes at once with 4 parallel accumulators

|

||||

for i <= len(data)-16 {

|

||||

sum1 += uint32(binary.BigEndian.Uint16(data[i : i+2]))

|

||||

sum2 += uint32(binary.BigEndian.Uint16(data[i+2 : i+4]))

|

||||

sum3 += uint32(binary.BigEndian.Uint16(data[i+4 : i+6]))

|

||||

sum4 += uint32(binary.BigEndian.Uint16(data[i+6 : i+8]))

|

||||

sum1 += uint32(binary.BigEndian.Uint16(data[i+8 : i+10]))

|

||||

sum2 += uint32(binary.BigEndian.Uint16(data[i+10 : i+12]))

|

||||

sum3 += uint32(binary.BigEndian.Uint16(data[i+12 : i+14]))

|

||||

sum4 += uint32(binary.BigEndian.Uint16(data[i+14 : i+16]))

|

||||

i += 16

|

||||

}

|

||||

|

||||

sum := sum1 + sum2 + sum3 + sum4

|

||||

|

||||

// Handle remaining bytes

|

||||

for i < len(data)-1 {

|

||||

sum += uint32(binary.BigEndian.Uint16(data[i : i+2]))

|

||||

i += 2

|

||||

}

|

||||

|

||||

if len(data)%2 == 1 {

|

||||

sum += uint32(data[len(data)-1]) << 8

|

||||

}

|

||||

|

||||

sum = (sum & 0xFFFF) + (sum >> 16)

|

||||

if sum > 0xFFFF {

|

||||

sum++

|

||||

}

|

||||

|

||||

return ^uint16(sum)

|

||||

}

|

||||

|

||||
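Review note: the two unrolled checksum functions above can be cross-checked against a plain implementation of the 16-bit one's-complement Internet checksum. The sketch below is such a reference, not code from the diff; `referenceChecksum` is a hypothetical name. Unlike `ipv4Checksum`, which skips bytes 10 to 12, it sums every byte, so the checksum field must be zeroed before calling it.

```go
package main

import (
	"encoding/binary"
	"fmt"
)

// referenceChecksum computes the 16-bit one's-complement Internet checksum
// over data, one big-endian word at a time.
func referenceChecksum(data []byte) uint16 {
	var sum uint32
	for i := 0; i+1 < len(data); i += 2 {
		sum += uint32(binary.BigEndian.Uint16(data[i : i+2]))
	}
	// An odd trailing byte is treated as the high byte of a final word.
	if len(data)%2 == 1 {
		sum += uint32(data[len(data)-1]) << 8
	}
	// Fold carries until the sum fits in 16 bits.
	for sum>>16 != 0 {
		sum = (sum & 0xFFFF) + (sum >> 16)
	}
	return ^uint16(sum)
}

func main() {
	// A 20-byte IPv4 header with the checksum field (bytes 10-11) zeroed.
	header := []byte{
		0x45, 0x00, 0x00, 0x73, 0x00, 0x00, 0x40, 0x00,
		0x40, 0x11, 0x00, 0x00,
		0xc0, 0xa8, 0x00, 0x01, 0xc0, 0xa8, 0x00, 0xc7,
	}
	fmt.Printf("0x%04x\n", referenceChecksum(header)) // prints 0xb861
}
```

Writing the result back into bytes 10 and 11 and running the function again yields 0, which is the usual way to validate a received header.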

type biDNATMap struct {

|

||||

forward map[netip.Addr]netip.Addr

|

||||

reverse map[netip.Addr]netip.Addr

|

||||

}

|

||||

|

||||

func newBiDNATMap() *biDNATMap {

|

||||

return &biDNATMap{

|

||||

forward: make(map[netip.Addr]netip.Addr),

|

||||

reverse: make(map[netip.Addr]netip.Addr),

|

||||

}

|

||||

}

|

||||

|

||||

func (b *biDNATMap) set(original, translated netip.Addr) {

|

||||

b.forward[original] = translated

|

||||

b.reverse[translated] = original

|

||||

}

|

||||

|

||||

func (b *biDNATMap) delete(original netip.Addr) {

|

||||

if translated, exists := b.forward[original]; exists {

|

||||

delete(b.forward, original)

|

||||

delete(b.reverse, translated)

|

||||

}

|

||||

}

|

||||

|

||||

func (b *biDNATMap) getTranslated(original netip.Addr) (netip.Addr, bool) {

|

||||

translated, exists := b.forward[original]

|

||||

return translated, exists

|

||||

}

|

||||

|

||||